The Sora shutdown feels bigger than one product disappearing. It suggests the AI market is starting to punish tools that look amazing but struggle to stay useful, safe, and worth the cost.

That is why this story matters now. One headline AI app is gone, one giant AI platform keeps growing while still fighting trust issues, and one chatbot already ran into government pressure.

If you are trying to understand what survives from here, this is the part worth watching. AI is not ending, but it is getting filtered.

A major AI video product got cut while OpenAI shifted attention toward coding, enterprise tools, robotics, and broader platform priorities.

OpenAI says ChatGPT now has more than 900 million weekly active users. That is not what a collapsing product looks like.

ChatGPT is huge, but source quality, overconfidence, and outages still shape how much people trust it.

Indonesia and Malaysia temporarily restricted Grok over explicit AI-generated image concerns. That turned product risk into policy risk fast.

The First Crack

Sora changed the vibe

Sora looked like the kind of product that should stay at the center of the AI conversation. It was visual, easy to understand, and built for instant attention. That made it powerful as a demo.

But demo power is not the same thing as product durability. Once hype fades, the hard questions show up. How costly is it to run, how risky is it to moderate, and how often do people actually keep using it?

That is why Sora matters beyond Sora. It became the cleanest example of a product that felt huge in public but weaker when judged like a business.

It felt immediate

Sora made AI video feel real in seconds. You did not need a long explanation to understand the appeal. That is rare, and it made the product easy to share.

It had to prove more

Video tools are expensive, easy to misuse, and harder to turn into repeat business value. Those problems are manageable during hype. They get much louder during reprioritization.

Why Disney Cared

The content bet

Disney’s interest made sense right away. Sora offered a way to test AI-generated short-form content using major characters in a more controlled setting. That made the partnership feel bigger than a normal feature deal.

For Disney, it opened a new format. For OpenAI, it added premium IP and commercial legitimacy. That is why the shutdown landed harder than a normal product update.

The nuance still matters here. The safer framing is not “Disney definitely lost a booked billion dollars.” The more accurate framing is that Disney lost a high-profile AI-video path before it had time to fully mature.

What Disney wanted

A controlled way to explore AI-generated entertainment with premium characters. A chance to test new formats without building the model itself. A front-row seat in a fast-moving space.

What changed

The platform disappeared before the path could settle. That made the loss feel strategic, not just financial. The product risk showed up first.

The Bigger Stake

Microsoft’s side

This is where fast summaries usually go wrong. Microsoft did not make a narrow public bet on Sora alone. Microsoft backed OpenAI broadly, which is a much bigger relationship.

That means Sora shutting down is not the same thing as “Microsoft’s AI bet failed.” It is more accurate to say one OpenAI product got cut while the larger model, cloud, and enterprise relationship stayed intact.

The real Microsoft question is not “did Sora die?” It is “can OpenAI keep turning scale into durable products that businesses keep paying for?”

| Question | Cleaner answer |
|---|---|
| Did Microsoft invest in Sora specifically? | No. There was no narrow, standalone public bet on Sora. Microsoft invested in OpenAI broadly. |
| Does the shutdown still matter? | Yes, but more as a product-economics signal than as a one-product financial disaster. |
| Why did Microsoft back OpenAI? | For Azure demand, model access, enterprise leverage, and long-term strategic position. |
| Where does Microsoft stand now? | The broader partnership still matters more than the loss of one AI video product. |

Cloud pull

Microsoft still benefits when OpenAI models and tools drive infrastructure usage. That core logic did not disappear with Sora. It is still central.

Execution risk

Microsoft is tied to OpenAI’s broader success, not just one product. That means the real exposure is about product durability over time. Sora is only one signal.

Showcase energy

One spectacular consumer-facing proof point is gone. That hurts narrative momentum. It does not break the whole thesis.

Google, But Chatty?

Why ChatGPT feels different

ChatGPT does not look like a product that is “going down” in the business sense. OpenAI says it now has more than 900 million weekly active users and more than 50 million consumer subscribers. That is a scale story, not a collapse story.

At the same time, calling it “the new Google” without nuance misses the real shift. ChatGPT is becoming a new starting point for discovery, especially when people want a quick answer before they want a list of links. That makes it a layer, not a replacement.

This is why model evolution matters so much here. Mock Certified’s look at ChatGPT upgrade differences and its comparison of GPT-4o vs GPT-4.5 both show how quickly user expectations change while the trust questions stay very much alive.

Where Trust Slips

The source problem

The trust issue is not just about whether AI can answer quickly. It is about whether the answer actually rests on the source the user thinks it does. That gap is what makes a polished answer feel safer than it may really be.

This is exactly where visibility changes shape. Reveation’s article on AEO for B2B marketing matters because AI systems increasingly reward content that is structured, clear, and easy to cite. The Smile Insider’s piece on GEO vs SEO supports the same shift from another angle.

That change did not happen overnight. Mock Certified’s earlier piece on ChatGPT Playground captures the early fascination stage well. What was once mostly novelty is now novelty plus trust plus governance plus economics.

Fast answers

People get fewer clicks, quicker summaries, and easier comparisons. That feels convenient immediately. It is one big reason AI discovery keeps growing.

Source confidence

AI can sound certain while flattening nuance or citing weakly. That makes errors feel more believable. The better the wording, the easier it is to miss the problem.

When Safety Hits Policy

Grok’s problem

Grok did not face a blanket global ban, and that detail matters. The verified version is more specific. Indonesia and Malaysia temporarily restricted access after backlash over explicit AI-generated image content.

That tells you something important about the market. Safety failures do not just create PR problems anymore. They can become policy problems when the issue feels visible and hard to defend.

The temporary nature of those restrictions matters too. It shows that this is often less about “forever banned” and more about how platforms get forced to tighten safeguards under pressure.

Indonesia

Indonesia temporarily blocked Grok after concerns over explicit AI-generated images. That made it the first country to deny access. It set the tone for the wider backlash.

Malaysia

Malaysia also temporarily restricted Grok over similar concerns. The message was clear. Safety controls can affect access itself.

What The Threads Say

Reddit gets loud

The Reddit threads are useful because they show the mood in plain language. They are not proof by themselves, but they do show how people are reading this moment. A lot of that mood comes down to cost, weak profitability, overhype, and fear that some current winners will not stay winners forever.

“One product dies, and people call the whole lab doomed.” That is where the threads usually overreach. A weak product and a strong platform can absolutely exist at the same time.

The crowd is not wrong to worry about cash burn and weak ROI. It just tends to jump too fast from “this is messy” to “everything is about to implode.” Markets are usually stranger than that.

What Reddit gets right

Costs are real, hype is real, and some AI products will not survive. That is not doom posting anymore. It is starting to look like market discipline.

Where Reddit goes too far

One weak product does not automatically prove platform collapse. Sora is a good example of that split. The product died, but the bigger platform story stayed alive.

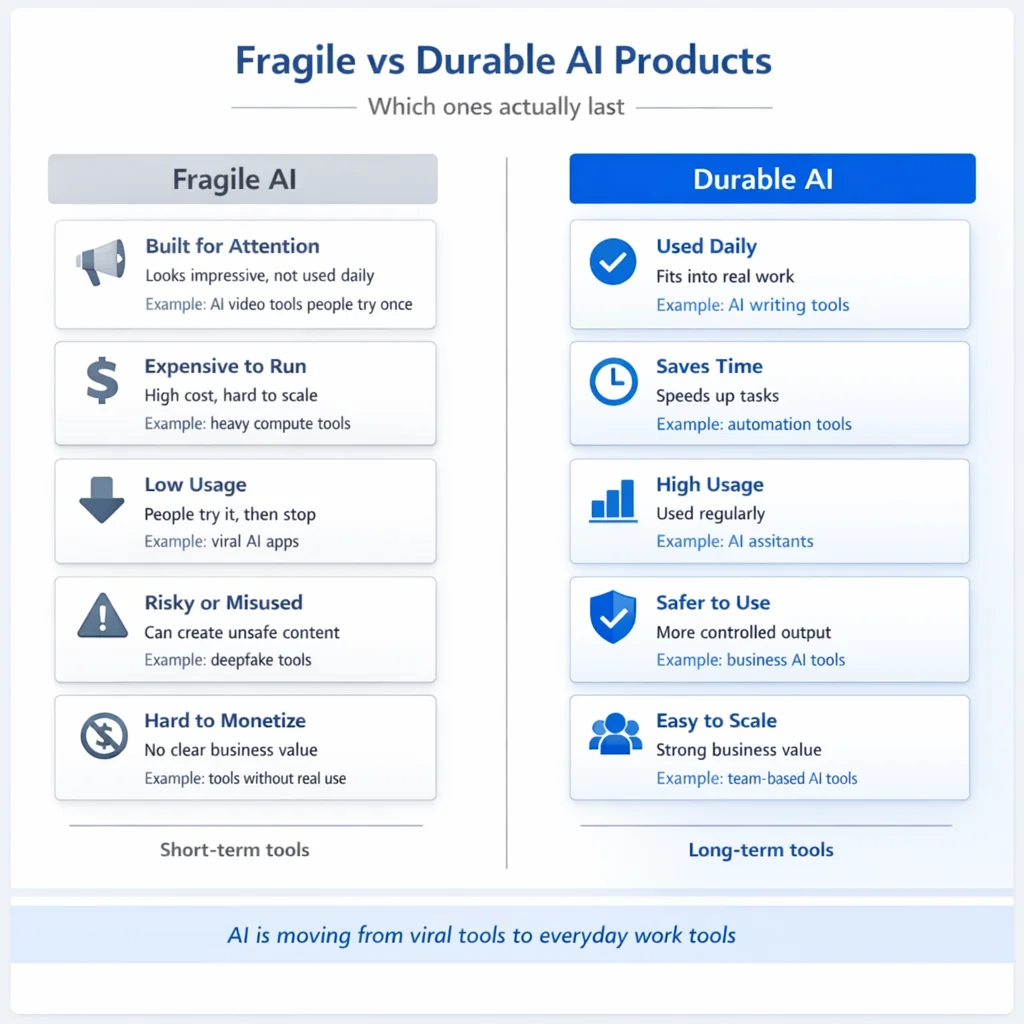

What Breaks First

The weak pattern

No honest blog can tell you the exact next AI platform that shuts down. What you can identify is the pattern that looks weakest. It usually combines high compute cost, shallow habit, weak business fit, and obvious safety or IP trouble.

That is why viral consumer AI looks more exposed than workflow AI right now. Products built on spectacle have to keep proving themselves every day. Products built into work get more chances to stay.

This is also where Mock Certified’s piece on the best LLM for coding becomes useful. Coding tools are boring in exactly the right way because they solve a repeat problem.

| Pattern | More fragile | More durable |
|---|---|---|
| Core usage | Novelty-driven | Daily workflow-driven |
| Cost profile | Heavy compute with weak payback | Measurable savings or speed |
| Trust profile | High misuse or moderation risk | Guardrailed and use-case specific |
| Retention | Curiosity spikes | Habit and dependency |
| Replacement risk | Easy to swap | Harder to remove from work |

Where Money Sticks

The safer side

The part of AI that still looks strongest is not the noisiest part. Enterprise workflow AI keeps making the most sense because it can save time, reduce labor, and fit directly into repeat tasks. Coding AI keeps looking strong for the same reason.

Infrastructure and inference also look safer than most flashy app-layer products. Every serious AI product depends on the layers underneath. That makes the boring stack more defensible than the shiny surface.

If you want a broader career and skills bridge, Mock Certified’s piece on artificial intelligence and data science fits here naturally. The real scope is not “all AI wins.” The real scope is where AI meets routine work and measurable value.

Workflow AI

Support, approvals, quoting, and operations are not glamorous. They are still where AI often creates the clearest measurable gain. That matters more than buzz.

Coding AI

Developer tools earn repeat use naturally. They fit work that already happens every day. That makes them easier to justify and harder to cut.

Pure spectacle

Products that mostly win on demos have to prove themselves again and again. That is a harder life than products tied to routine business value. Sora just showed why.

Conclusion

What to actually watch

The Sora shutdown does not mean AI is breaking. It means the market is getting stricter about what counts as a real product. Expensive, risky, or weakly attached tools are under more pressure than tools tied to daily work.

Disney’s loss is mostly strategic. Microsoft’s risk is broad rather than Sora-specific, while ChatGPT is scaling hard and still carrying trust issues. Grok shows what happens when safety becomes a government issue instead of just a product issue.

That is the real headline hiding under all the noise. AI is not ending. It is sorting itself out.

FAQ

Why did Sora shut down?

Sora appears to have become harder to justify than the AI categories OpenAI now prioritizes. Compute cost, safety pressure, and weaker repeat workflow fit all seem to have mattered. That makes it a product-economics story, not a blanket statement about AI.

Did Disney lose money on Sora?

The safer framing is that Disney lost a strategic AI-video path. Reuters reported the deal never officially closed and no money changed hands. That is different from a simple “Disney lost a billion dollars” headline.

Did Microsoft invest in Sora specifically?

Not as a narrow standalone public bet. Microsoft invested in OpenAI broadly. That is why Sora’s shutdown matters more as a signal than as a one-product investment disaster.

Is ChatGPT going down?

As a service, it still has incidents and degraded periods. As a business, current evidence points the other way because OpenAI says usage keeps scaling sharply. Those are two very different meanings of “going down.”

Which countries restricted Grok?

Indonesia and Malaysia temporarily restricted Grok over explicit AI-generated image concerns. Those actions were temporary, but they showed how quickly safety issues can affect access. That is the bigger takeaway.

Which part of AI has the most scope now?

Enterprise workflow AI, coding tools, and infrastructure still look like the safest near-term bets. They solve repeat problems and create clearer measurable value. That usually beats pure spectacle over time.

Sources

- Reuters: OpenAI drops AI video tool Sora, startling Disney, sources say

- AP: OpenAI pulls the plug on Sora

- OpenAI: Scaling AI for everyone

- OpenAI Status

- Microsoft and OpenAI joint statement on continuing partnership

- Reuters: Indonesia temporarily blocks access to Grok

- Reuters: Malaysia restricts access to Grok AI

- Reuters: Indonesia lets Grok resume after safeguards

- Tow Center: AI search has a citation problem

- Investopedia: Why AI companies struggle financially

Reddit threads informed the mood section only. They were not used as primary factual sources.

“The economics look ugly.” That is the part Reddit gets right most often. A lot of AI products are expensive to run, and not every flashy demo turns into something people use every day.